As we look forward to continued growth for the XPRTs in 2025, it’s also a fitting time to take stock of just how much their reach has already grown around the globe. In the marketing world, reach is often defined as the size of the audience that sees and/or engages with your content. We track XPRT reach with several metrics—including completed test runs, benchmark downloads, and mentions of the XPRTs in advertisements, articles, and tech reviews. Gathering this information gives us insight into how many people are using the XPRTs, and it provides a sense of the impact the XPRTs are having around the world. It also helps us understand the needs of those who use them.

From time to time, we publish an updated version of an “XPRTs around the world” infographic, which features highlights from the reach metrics we track. This week, we published a new version of the infographic that includes the following highlights:

- Over 4,100 unique sites have collectively mentioned the XPRTs more than 20,500 times.

- Those mentions include more than 12,900 tech articles and reviews.

- XPRT tech press mentions and test runs have originated in over 983 cities located in 84 countries on six continents. New cities of note include San Salvador, El Salvador; Salamanca, Mexico; Fes, Morocco; Wanaka, New Zealand; and Luzern, Switzerland.

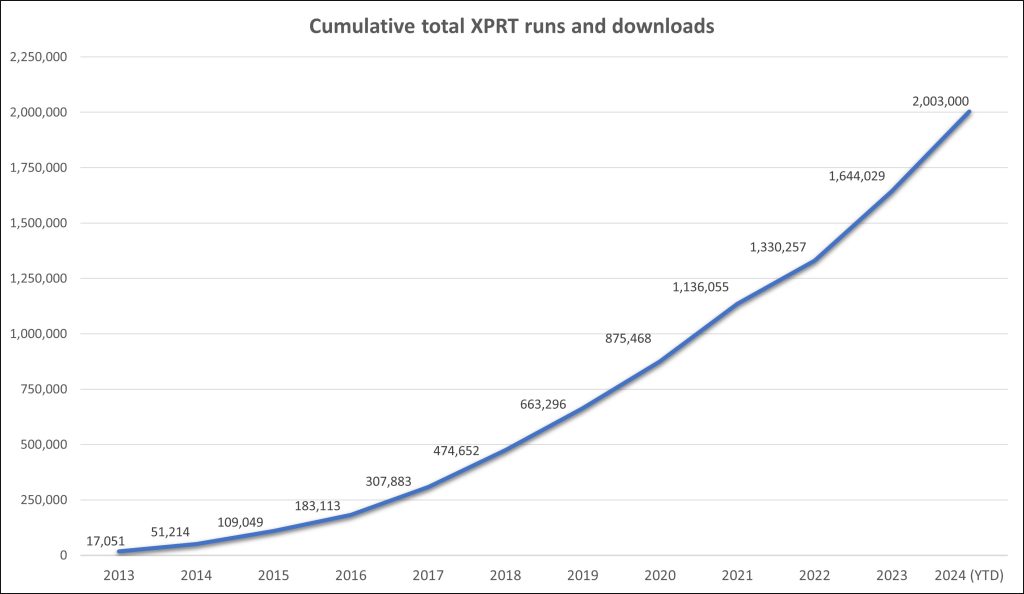

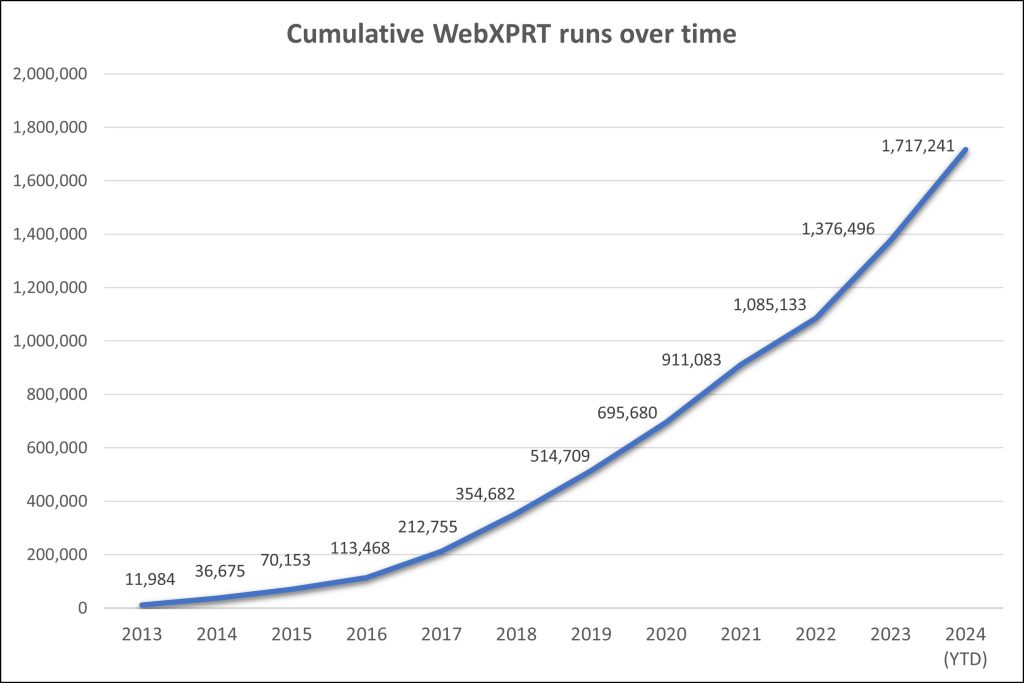

In addition to the reach metrics we mention above, the XPRTs have now delivered more than 2,020,000 real-world results! We’re grateful to everyone who has used the XPRTs and spread the word to others. Your active involvement makes it possible for us to achieve our overall goals: to provide benchmark tools that are reliable, relevant, free, and simple to use.

Justin