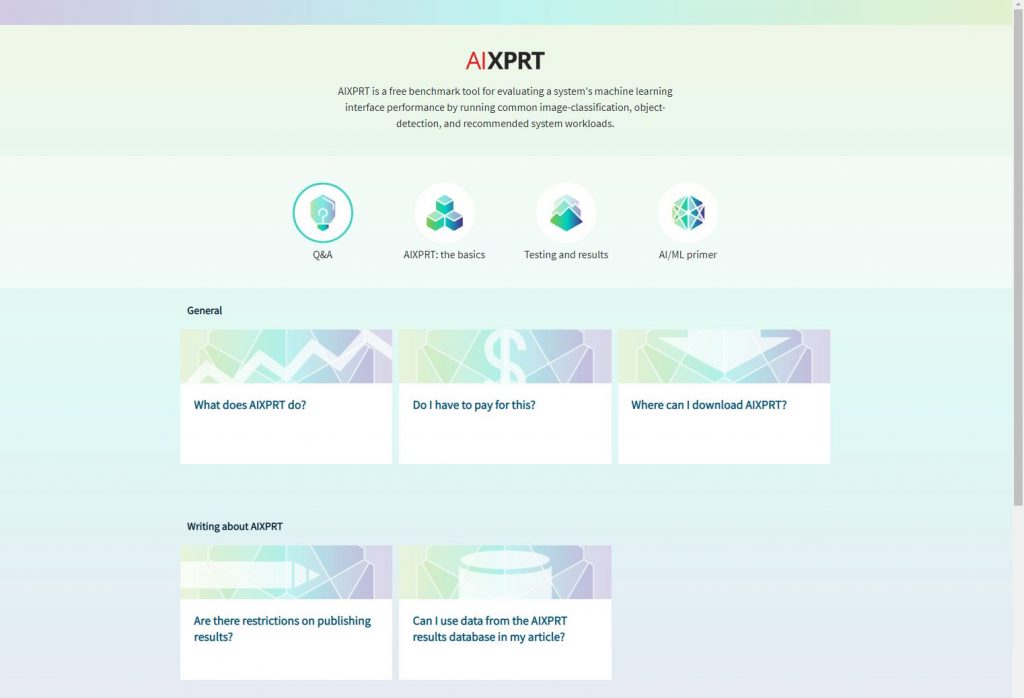

We’re happy to announce that the AIXPRT learning tool is now live! We designed the tool to serve as an information hub for common AIXPRT topics and questions, and to help tech journalists, OEM lab engineers, and everyone who is interested in AIXPRT find the answers they need in as little time as possible.

The tool features four primary areas of content:

- The Q&A section provides quick answers to the questions we receive most from testers and the tech press.

- The “AIXPRT: the basics” section describes specific topics such as the benchmark’s toolkits, networks, workloads, and hardware and software requirements.

- The testing and results section covers the testing process, metrics, and how to publish results.

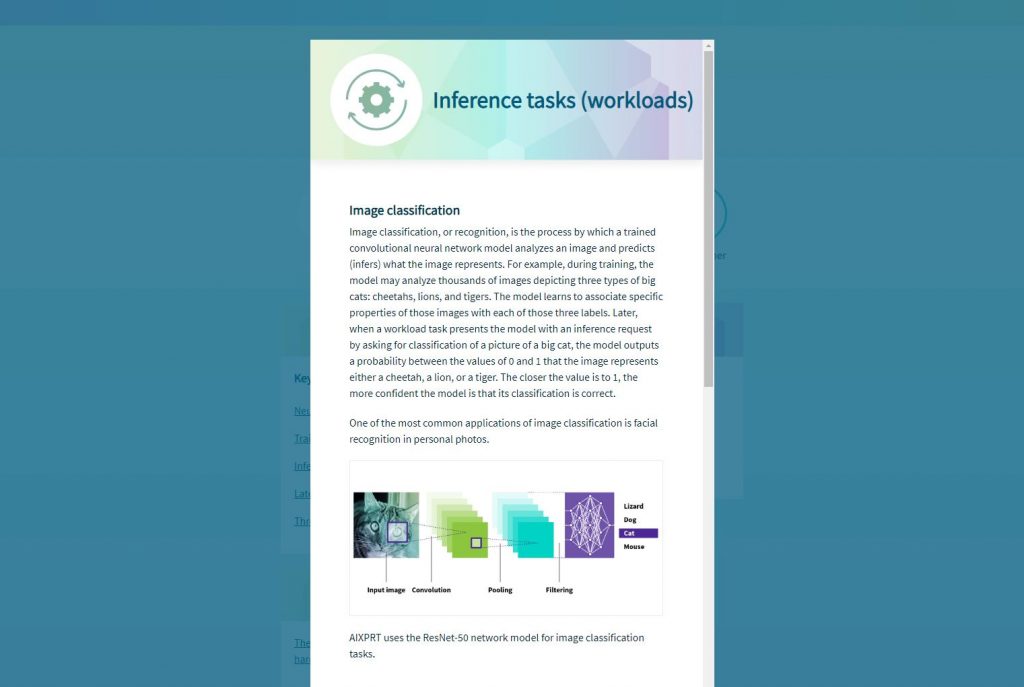

- The AI/ML primer provides brief, easy-to-understand definitions of key AI and ML terms and concepts for those who want to learn more about the subject.

The first screenshot shows the home screen. The second screenshot shows the Inference tasks (workloads) entry in the AI/ML primer section, as an example of how the pop-up information sections appear.

We’re excited about the new AIXPRT learning tool, and we’re also happy to report that we’re working on a version of the tool for CloudXPRT. We hope to make the CloudXPRT tool available early next year, and we’ll post more information in the blog as we get closer to taking it live.

If you have any questions about the tool, please let us know!

Justin