We’re happy to announce that AIXPRT is now available to the public! AIXPRT includes support for the Intel OpenVINO, TensorFlow, and NVIDIA TensorRT toolkits to run image-classification and object-detection workloads with the ResNet-50 and SSD-MobileNet v1networks, as well as a Wide and Deep recommender system workload with the Apache MXNet toolkit. The test reports FP32, FP16, and INT8 levels of precision.

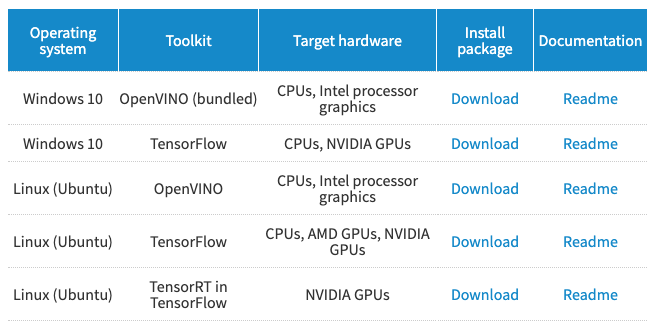

To access AIXPRT, visit the AIXPRT download page. There, a download table displays the AIXPRT test packages. Locate the operating system and toolkit you wish to test and click the corresponding Download link. For detailed installation instructions and information on hardware and software requirements for each package, click the package’s Readme link. If you’re not sure which AIXPRT package to choose, the AIXPRT package selector tool will help to guide you through the selection process.

In addition, the Helpful Info box on AIXPRT.com contains links to a repository of AIXPRT resources, as well links to XPRT blog discussions about key AIXPRT test configuration settings such as batch size and precision.

We hope AIXPRT will prove to be a valuable tool for you, and we’re thankful for all the input we received during the preview period! If you have any questions about AIXPRT, please let us know.