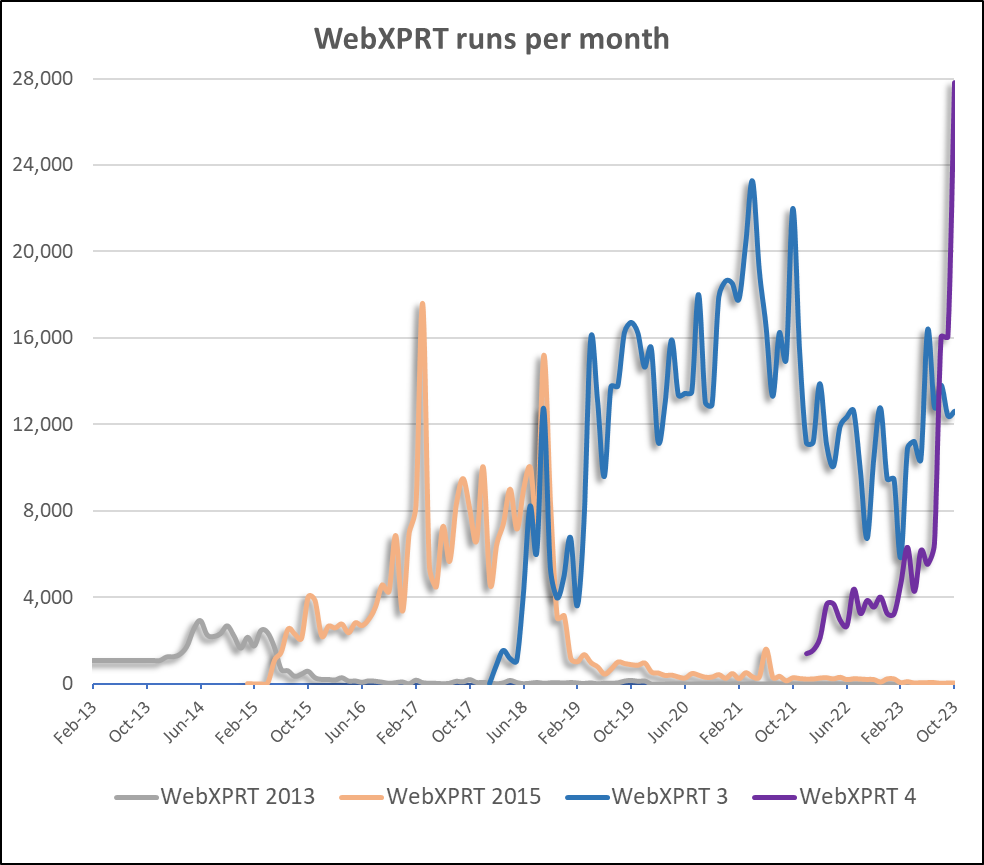

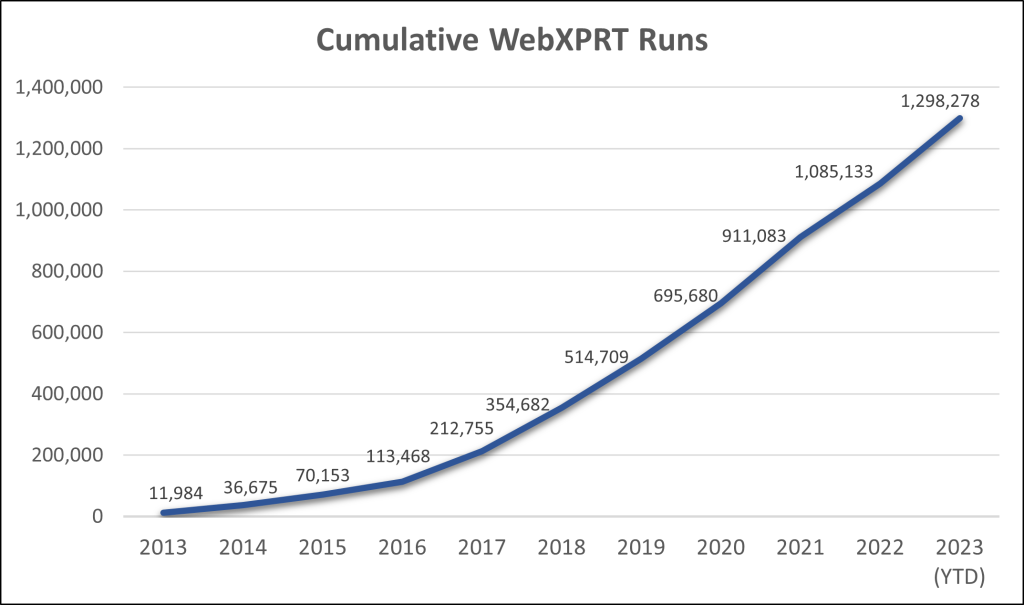

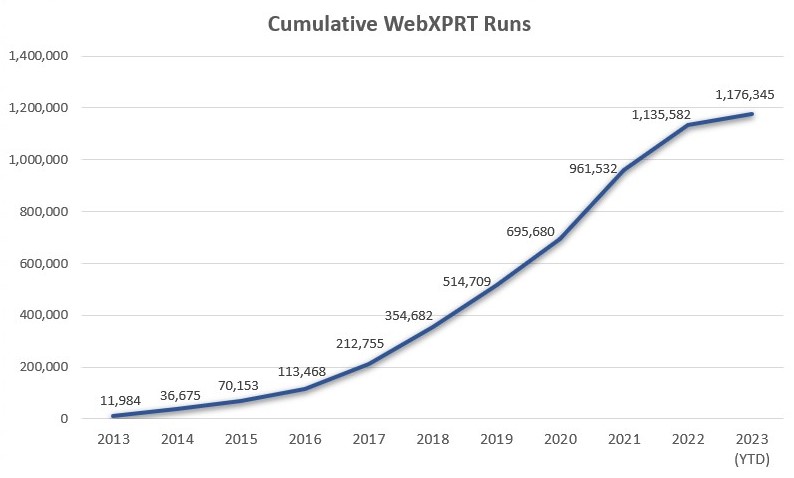

We’re excited to announce that WebXPRT 5 is officially on the way! Since we launched WebXPRT 4 in February 2022, it has proven to be an exceptionally successful and reliable go-to benchmark for OEM labs, the tech press, and individual users alike, with over 644,000 runs to date. In past blog posts, we’ve discussed new features and possible auxiliary workloads that we contemplated adding to WebXPRT 4. However, as we considered user comments and suggestions, changes in web technology, and how best to keep WebXPRT relevant as a browser benchmark in the future, it became clear that it was time for an all-new WebXPRT.

Now that we’ve announced WebXPRT 5, the first question for many existing WebXPRT users may be, “When will WebXPRT 5 be available?” We’re not yet ready to share an anticipated WebXPRT 5 release date, but we can share that a lot of groundwork is already complete, and the remaining work is moving along rapidly. We’ll continue to issue updates here in the blog as we reach important milestones.

The second question for many existing WebXPRT users may be, “How will WebXPRT change?” We’re not yet ready to share extensive details about WebXPRT 5’s workloads (rest assured that we will as soon as we can firm up everything), but we can share a few key guidelines we’ve tried to follow in our WebXPRT 5 design. Each of these points of emphasis reflects feedback we’ve received from labs and features that users have asked for.

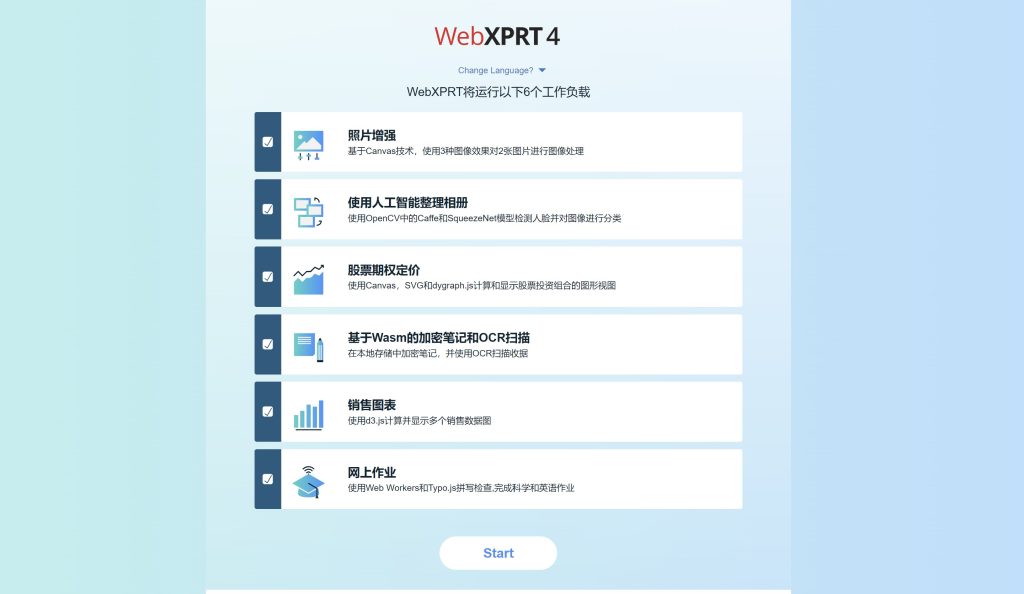

- Provide more AI-related workloads. In past blog posts, we’ve discussed the growing importance of local, browser-side AI. WebXPRT 4 already includes timed AI tasks in two of its workloads: the Organize Album using AI workload and the Encrypt Notes and OCR Scan workload. We’re working on ways to expand WebXPRT’s AI portfolio in the next version.

- Add WebGPU workloads. As a web API, WebGPU enables web-based applications—such as image-based GenAI and inference workloads—to directly access the graphics rendering and computational capabilities of a system’s GPU. We hope to incorporate WebGPU measures in WebXPRT 5.

- Improve WebXPRT’s utility as a tool for test labs, publications, and engineering analysis.

  - Update the workloads with longer operations. Many of WebXPRT’s existing workloads no longer challenge cutting-edge consumer hardware as much as many of us would like. Testing labs have asked for longer and more demanding workloads. We’re working on incorporating workloads that are accessible enough to be run by a broad range of devices yet challenging enough to allow performance differentiation among high-end systems.

  - Enable more precise performance measures. Labs and testers have also asked for more granular insight into the workloads to help with engineering-level performance analysis. Currently, some WebXPRT 4 workload scores include multiple timed tasks. If we separate those compound scores so that each workload reports results from only one timed task, users will be able to more precisely assess how well a device performs while handling specific operations. We’re looking into this approach.

  - Modernize the harness to make it more flexible and to speed future work. WebXPRT 4’s current harness works with server-side sessions on a LAMP (Linux, Apache, MySQL and PHP) stack. If we implement the harness via JavaScript on the client side, it will pave the way for faster development and testing cycles in the future.
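
For readers less familiar with the WebGPU item in the list above: a web page reaches WebGPU through `navigator.gpu`, and asks for a GPU adapter with `requestAdapter()`. The TypeScript sketch below shows one way a test page might check for WebGPU before attempting a GPU-based task. It is an illustration only, not WebXPRT 5 code. The names `detectWebGpu`, `GpuLike`, `NavigatorLike`, and `WebGpuStatus` are hypothetical, and the two small interfaces stand in for the real browser types so the snippet is self-contained.

```typescript
// Minimal stand-ins for the browser's WebGPU types (hypothetical names,
// declared here only so the sketch compiles without extra type packages).
interface GpuLike {
  requestAdapter(): Promise<object | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type WebGpuStatus = "available" | "no-adapter" | "unsupported";

// Reports whether the given navigator exposes WebGPU and can supply an adapter.
async function detectWebGpu(nav: NavigatorLike): Promise<WebGpuStatus> {
  if (!nav.gpu) {
    return "unsupported"; // the browser does not expose navigator.gpu
  }
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "available" : "no-adapter";
  } catch {
    return "no-adapter"; // treat a rejected request as "no usable adapter"
  }
}
```

In a browser, the function would be given the page's own `navigator` object. Taking it as a parameter keeps the sketch easy to exercise outside a browser as well.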

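Two of the goals above, reporting one timed task per result and running the harness in client-side JavaScript, can be pictured with a small TypeScript sketch. Everything in it is hypothetical: `TimedTask`, `TaskResult`, `runTasks`, and the injected `now` clock are illustrative assumptions, not WebXPRT 5’s actual design.

```typescript
// A single timed task: a name plus the work to measure (hypothetical shape).
interface TimedTask {
  name: string;
  run: () => void | Promise<void>;
}

interface TaskResult {
  name: string;
  elapsedMs: number;
}

// Runs tasks one at a time and records a separate elapsed time for each,
// instead of folding several tasks into one compound number.
// `now` is injectable; it defaults to Date.now, and in a browser a caller
// could pass () => performance.now() for finer resolution.
async function runTasks(
  tasks: TimedTask[],
  now: () => number = () => Date.now()
): Promise<TaskResult[]> {
  const results: TaskResult[] = [];
  for (const task of tasks) {
    const start = now();
    await task.run();
    results.push({ name: task.name, elapsedMs: now() - start });
  }
  return results;
}
```

Because the results live in a plain array on the client, a harness along these lines would not need a server-side session to collect them. How WebXPRT 5 will actually store and report results is not something this sketch tries to predict.
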
We expect WebXPRT 5 to carry on the WebXPRT legacy of reliability and real-world relevance, while providing users with compelling new workloads and features. As has been our habit with new benchmark releases, however, we won’t force anyone to change versions anytime soon. Instead, we will continue to make WebXPRT 4 available for quite some time after WebXPRT 5 goes live.

If you have any questions or comments about WebXPRT, please let us know!

Justin