One way we assess the XPRTs’ ongoing effectiveness is to regularly track the reach of our benchmarks in the global tech press. If tech journalists decide to include an XPRT benchmark in their suite of “go-to” performance evaluation tools, we know that decision reflects a high degree of confidence in the relevance and reliability of our benchmarks. It’s especially exciting for us to see the XPRTs win the trust of more tech press outlets in an ever-increasing number of countries around the world.

Because some of our newer readers may be unaware of the wide variety of tech press outlets that use the XPRTs, we occasionally like to share an overview of recent XPRT-related global tech press activity. For today’s blog, we want to give readers a sampling of the press mentions we’ve seen over the past few months.

Recent mentions include:

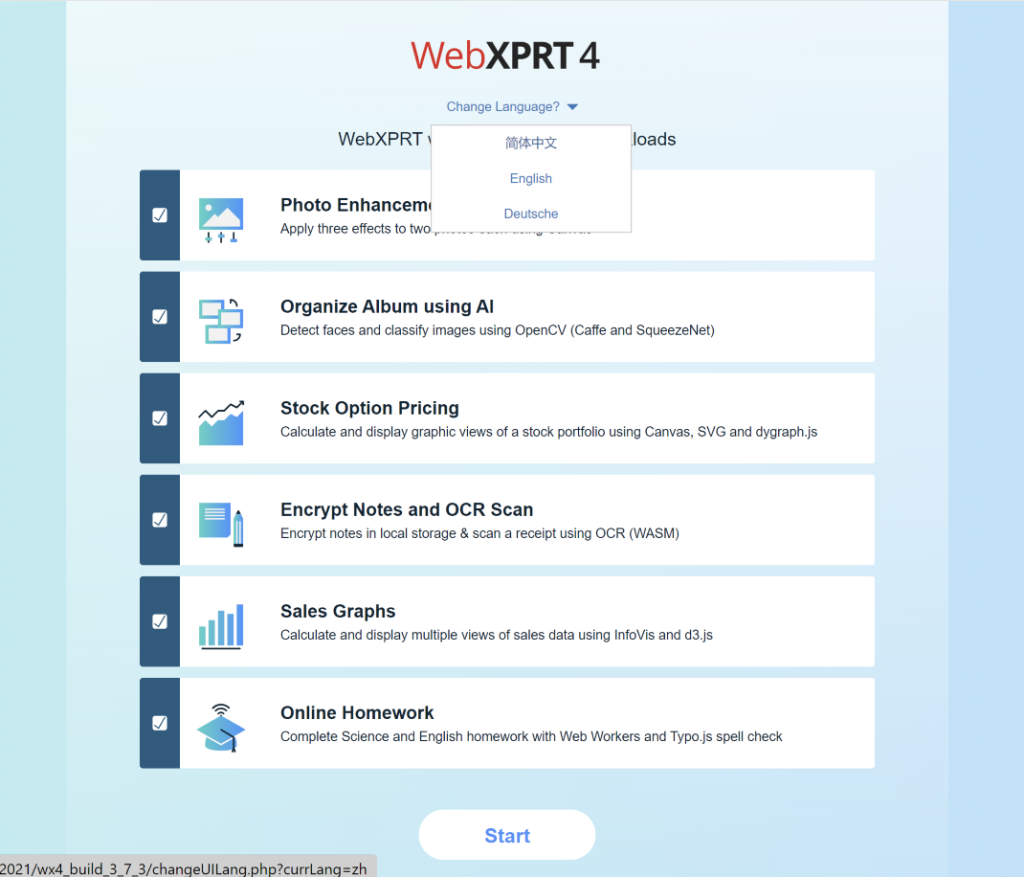

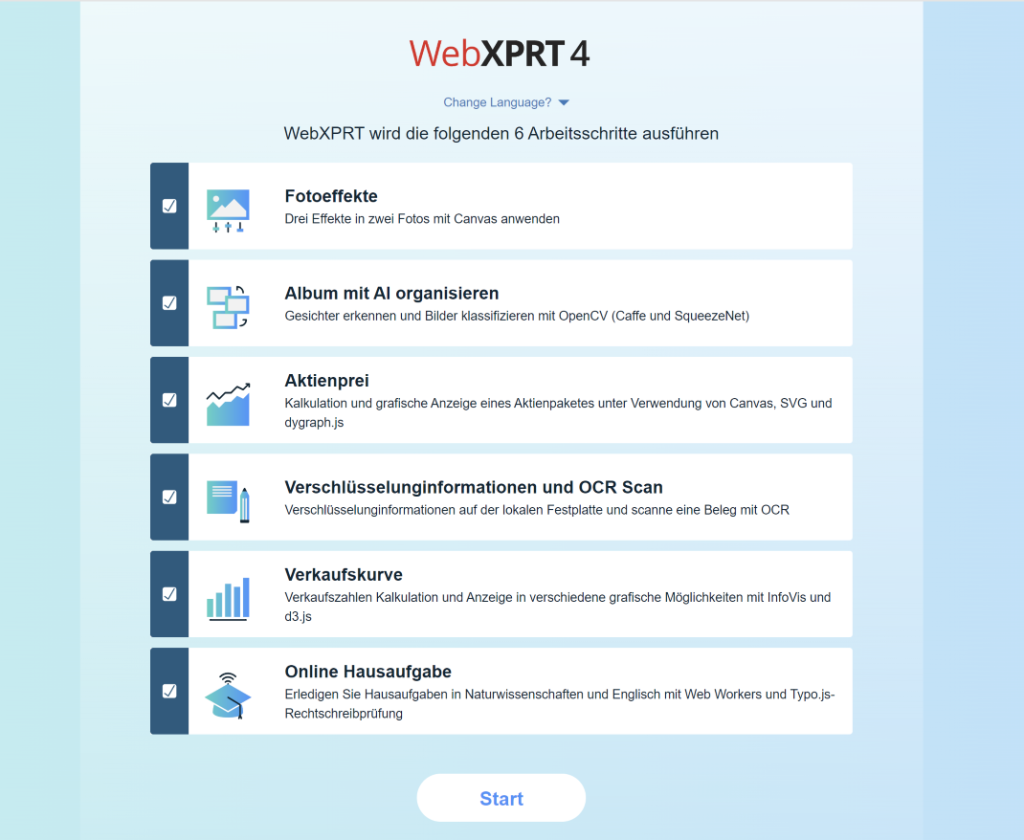

- Android Authority used WebXPRT in an article discussing the performance and features of Google Chrome and Microsoft Edge.

- Mashable used CrXPRT 2 to measure the battery life of a range of laptops for an article titled “Best cheap laptops for 2024: Every model under $1,000 that we’ve tested and loved.”

- Notebookcheck used WebXPRT 4 in dozens of device reviews, including evaluations of the Acer Swift 14 AI, Apple iPhone 16 Pro Max, ASUS ZenBook S 14, HP OmniBook Ultra 14, Lenovo ThinkPad P1 Gen 7, and Samsung Galaxy Z Fold6.

- PCWorld referenced CrXPRT 2 in an article titled “Best Chromebooks 2024: Best overall, best battery life, and more.”

- Tom’s Hardware used WebXPRT 4 to evaluate the performance of the Intel Core Ultra 9 285K.

- Other articles, ads, or reviews mentioning the XPRTs in the last few months have come from 3DNews (Russia), Acer, Alza.cz (Czech Republic), Benchlife.info, Benchmark.best, ComputerBase (Germany), eTeknix, Gadgety (Israel), Intel, ITC.ua (Ukraine), Laptop Mag, Mashable, PC Games Hardware (Germany), PCWorld, QQ.com (China), Sohu.com (China), TechRadar, Tool Elvaliant (Italy), and Tweakers.

If you’d like to receive monthly updates on XPRT news, we encourage you to sign up for the BenchmarkXPRT Development Community newsletter. Each month, the newsletter delivers a summary of the previous month’s XPRT-related activity, including XPRT blog posts and new mentions of the XPRTs in the tech press. If you don’t currently receive the monthly BenchmarkXPRT newsletter but would like to join the mailing list, please let us know! It’s free to join. We won’t publish, share, or sell any of the contact information you provide, and we’ll send you only the monthly newsletter and occasional benchmark-related announcements, such as news about patches or new releases.

If you have any questions about the XPRTs, suggestions for improvement, or requests for future blogs, please just contact us.

Justin