The big day has finally arrived—WebXPRT 5 is now available!

You can access the benchmark at WebXPRT.com or WebXPRT5.com. For longtime WebXPRT users, the WebXPRT 5 UI has an all-new look but a very familiar feel. The general process for kicking off both manual and automated tests is the same as with WebXPRT 4, so the transition to WebXPRT 5 testing should be straightforward. For legacy testing purposes, we will continue to make WebXPRT 4 available on our site.

Here is a quick overview of the differences between WebXPRT 4 and WebXPRT 5:

General changes

- We’ve updated the aesthetics of the WebXPRT UI to make WebXPRT 5 visually distinct from older versions. We did not significantly change the flow of the UI.

- We’ve updated content in some of the workloads to reflect changes in everyday technology, such as upgrading most of the photos in the photo processing workloads to higher resolutions.

- We’ve updated the base calibration system for score calculations and adjusted the scoring scale. WebXPRT 5 scores will be in a lower numerical range than WebXPRT 4 scores, so you should not compare WebXPRT 5 scores to scores from previous versions of WebXPRT.

The workloads

WebXPRT 5 includes the following seven workloads:

- Video Background Blur with AI. Blurs the background of a video call using an AI-powered segmentation model.

- Photo Effects. Applies a filter to six photos using the Canvas API.

- Detect Faces with AI. Detects faces and organizes photos in an album using computer vision (OpenCV.js with a Caffe model).

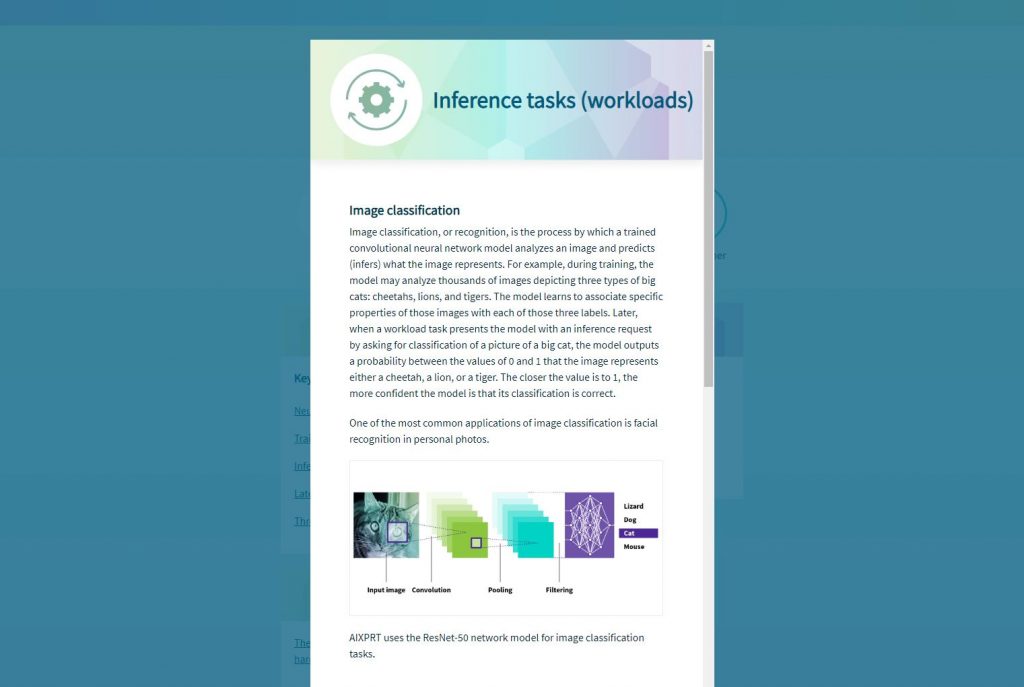

- Image Classification with AI. Labels images in an album using machine learning (OpenCV.js and ML Classify with the SqueezeNet model).

- Document Scan with AI. Scans a document image and converts it to text using ML-based OCR (Wasm with LSTM).

- School Science Project. Processes a DNA sequencing task using regex and string manipulation.

- Homework Spellcheck. Spellchecks a document using Typo.js and Web Workers.
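
To give a feel for the kind of per-pixel work a Canvas-based photo filter involves, here is a minimal sketch. It is not WebXPRT's code: the function name `applySepia` and the choice of a sepia filter are our own assumptions for illustration. It operates on RGBA bytes laid out the way the Canvas API's `ImageData.data` exposes them (four bytes per pixel).

```typescript
// Hypothetical helper, not taken from WebXPRT: applies a sepia tone in place
// to RGBA pixel data laid out like ImageData.data (4 bytes per pixel).
function applySepia(pixels: Uint8ClampedArray): Uint8ClampedArray {
  for (let i = 0; i + 3 < pixels.length; i += 4) {
    const r = pixels[i];
    const g = pixels[i + 1];
    const b = pixels[i + 2];
    // Commonly used sepia weights; Uint8ClampedArray rounds each result
    // and clamps it to the 0..255 range on assignment.
    pixels[i] = 0.393 * r + 0.769 * g + 0.189 * b;
    pixels[i + 1] = 0.349 * r + 0.686 * g + 0.168 * b;
    pixels[i + 2] = 0.272 * r + 0.534 * g + 0.131 * b;
    // The alpha byte at pixels[i + 3] is left unchanged.
  }
  return pixels;
}
```

In a browser, you would typically read the pixels with `getImageData` on a 2D canvas context, pass the `data` array to a function like this one, and write the result back with `putImageData`.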

We’re thankful for all of the feedback we received during the WebXPRT 5 development process and Preview period, and we look forward to seeing your WebXPRT 5 results. If you have any questions about WebXPRT, please feel free to contact us!

Justin