In last week’s AIXPRT Community Preview 3 announcement, we mentioned the new public GitHub repository that we’re using to publish AIXPRT-related information and resources. In addition to the installation readmes for each AIXPRT installation package, the repository contains a selection of alternative test config files that testers can use to quickly and easily change a test’s parameters.

As we discussed in previous blog entries about batch size, levels of precision, and number of concurrent instances, AIXPRT testers can adjust each of these key variables by editing the JSON file in the AIXPRT/Config directory. While the process is straightforward, editing each of the variables in a config file can take some time, and testers don’t always know the appropriate values for their system. To address both of these issues, we are offering a selection of alternative config files that testers can download and drop into the AIXPRT/Config directory.
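
For testers who would rather script these edits than make them by hand, here is a minimal Python sketch. It does not assume anything about the actual AIXPRT config schema: it walks whatever JSON it is given and replaces the value of any key named in `overrides`. The key names in the usage comment (`precision`, `batch_sizes`) and the file name are hypothetical placeholders, so check them against the real config file before relying on them.

```python
import json
from pathlib import Path


def apply_overrides(node, overrides):
    """Return a copy of `node` with every key named in `overrides` replaced."""
    if isinstance(node, dict):
        return {
            key: overrides[key] if key in overrides
            else apply_overrides(value, overrides)
            for key, value in node.items()
        }
    if isinstance(node, list):
        return [apply_overrides(item, overrides) for item in node]
    return node  # strings, numbers, booleans, and null pass through unchanged


def rewrite_config(path, overrides):
    """Load a JSON config, apply the overrides, and write it back in place."""
    path = Path(path)
    config = json.loads(path.read_text(encoding="utf-8"))
    updated = apply_overrides(config, overrides)
    path.write_text(json.dumps(updated, indent=4) + "\n", encoding="utf-8")
    return updated


# Hypothetical usage; the file name and key names are assumptions:
# rewrite_config("AIXPRT/Config/example.json",
#                {"precision": "fp32", "batch_sizes": [1, 2, 4]})
```

Because the function matches on key names alone, it changes every occurrence of a key in the file. That is convenient for a blanket change, but it is too blunt if different workloads need different values.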

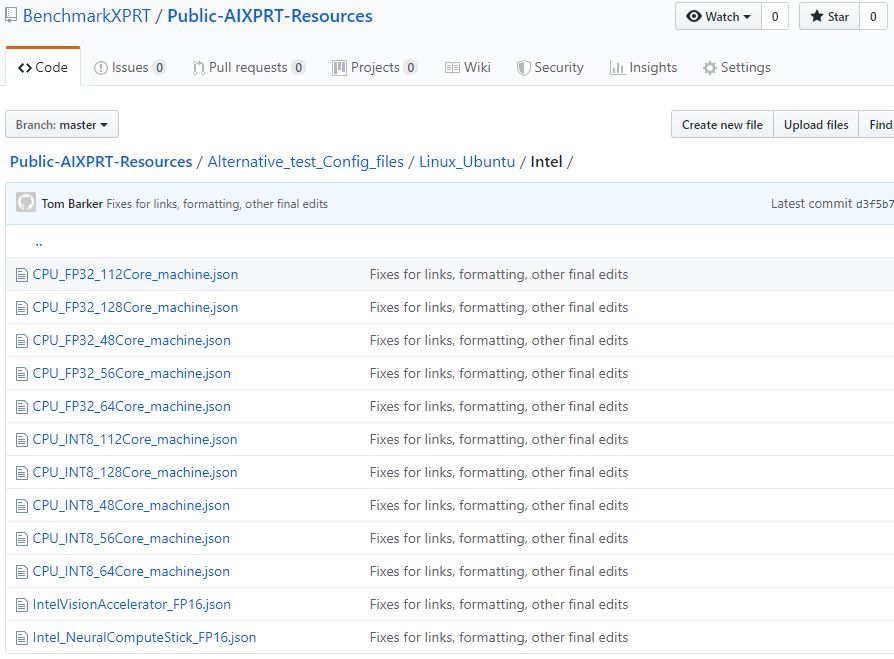

In the GitHub repository, we’ve organized the available config files first by operating system (Linux_Ubuntu and Windows) and then by vendor (All, Intel, and NVIDIA). Within each section, testers will find preconfigured JSON files set up for several scenarios, such as running with multiple concurrent instances on a system’s CPU or GPU, running with FP32 precision instead of FP16, etc. The picture below shows the preconfigured files that are currently available for systems running Ubuntu on Intel hardware.

Because potential AIXPRT use cases cut across a wide range of hardware segments, including desktops, edge devices, and servers, not all AIXPRT workloads and configs will be applicable to each segment. As we move towards the AIXPRT GA, we’re working to find the best way to sort out these distinctions and communicate them to end users. In many cases, the ideal combination of test configuration variables remains an open question for ongoing research. However, we hope the alternative configuration files will help by giving testers a starting point.

If you experiment with an alternative test configuration file, please note that it should replace the existing default config file. If more than one config file is present, AIXPRT will run all the configurations and generate a separate result for each. More information about the config files and detailed instructions for how to handle the files are available in the EditConfig.md document in the public repository.
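
As an illustration of the swap described above, the following Python sketch moves any JSON files already in the config directory to a backup folder and then copies in the alternative file, so that only one configuration remains. The function name, the backup folder, and the example paths are our own assumptions for illustration; EditConfig.md remains the authoritative guide to handling the files.

```python
import shutil
from pathlib import Path


def install_config(alternative, config_dir, backup_dir):
    """Make `alternative` the only JSON file in `config_dir`.

    Existing JSON files are moved to `backup_dir` rather than deleted,
    so the default config can be restored later.
    """
    alternative = Path(alternative).resolve()
    config_dir = Path(config_dir).resolve()
    backup_dir = Path(backup_dir)

    # Guard: if the alternative already sits in the config directory,
    # the loop below would move it away before it could be copied.
    if alternative.parent == config_dir:
        raise ValueError("alternative config must live outside config_dir")

    backup_dir.mkdir(parents=True, exist_ok=True)

    moved = []
    for existing in sorted(config_dir.glob("*.json")):
        target = backup_dir / existing.name
        shutil.move(str(existing), str(target))
        moved.append(target)

    installed = config_dir / alternative.name
    shutil.copy2(alternative, installed)
    return installed, moved


# Hypothetical usage; all three paths are placeholders:
# install_config("Downloads/alternative.json", "AIXPRT/Config", "AIXPRT/ConfigBackup")
```

Moving the old files instead of deleting them makes it easy to return to the default configuration: copy the backed-up file into the config directory again and remove the alternative.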

We’ll continue to keep everyone up to date with AIXPRT news here in the blog. If you have any questions or comments, please let us know.

Justin