In case you missed the announcement, WebXPRT 3 is now live! Please try it out, submit your test results, and feel free to send us your questions or comments.

During the final push toward launch day, it occurred to us that not all of our readers are aware of the steps involved in preparing a benchmark for general availability (GA). Here’s a quick overview of what we did over the last several weeks to prepare for the WebXPRT 3 release, a process that follows the same approach we use for all new XPRTs.

After releasing the community preview (CP), we started on the final build. During this time, we incorporated features that we were not able to include in the CP and fixed a few outstanding issues. Because we always try to make sure that CP results are comparable to eventual GA results, these issues rarely involve the workloads themselves or anything that affects scoring. In the case of WebXPRT 3, the end-of-test results submission form was not fully functional in the CP, so we finished making it ready for prime time.

The period between CP and GA releases is also a time to incorporate any feedback we get from the community during initial testing. One of the benefits of membership in the BenchmarkXPRT Development Community is access to pre-release versions of new benchmarks, along with an opportunity to make your voice heard during the development process.

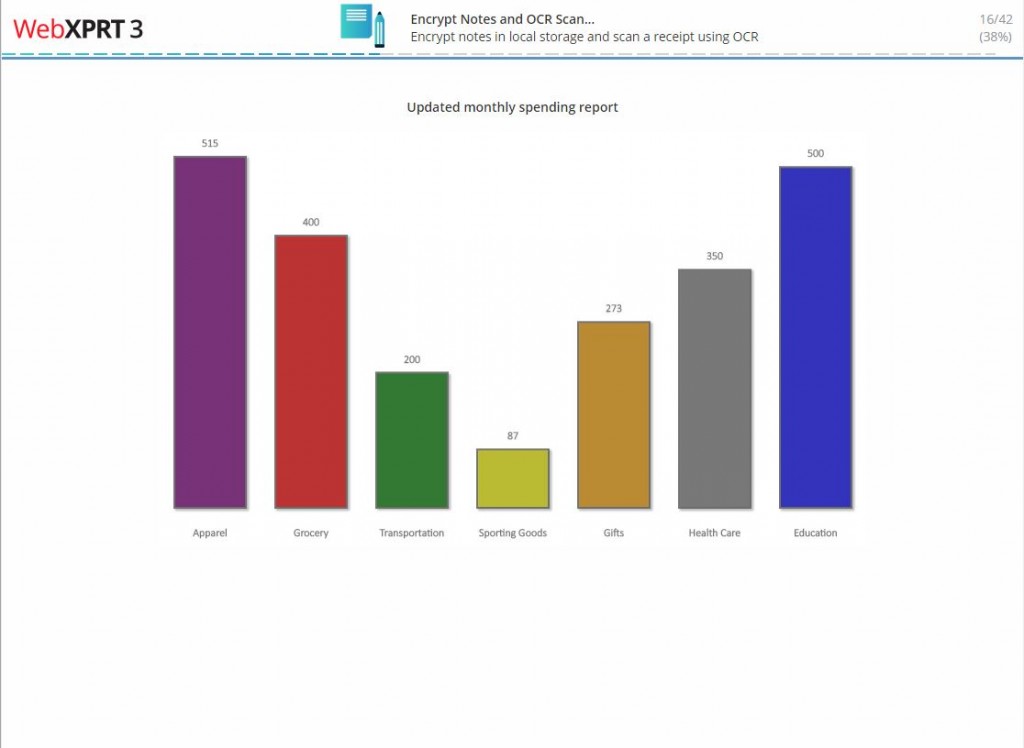

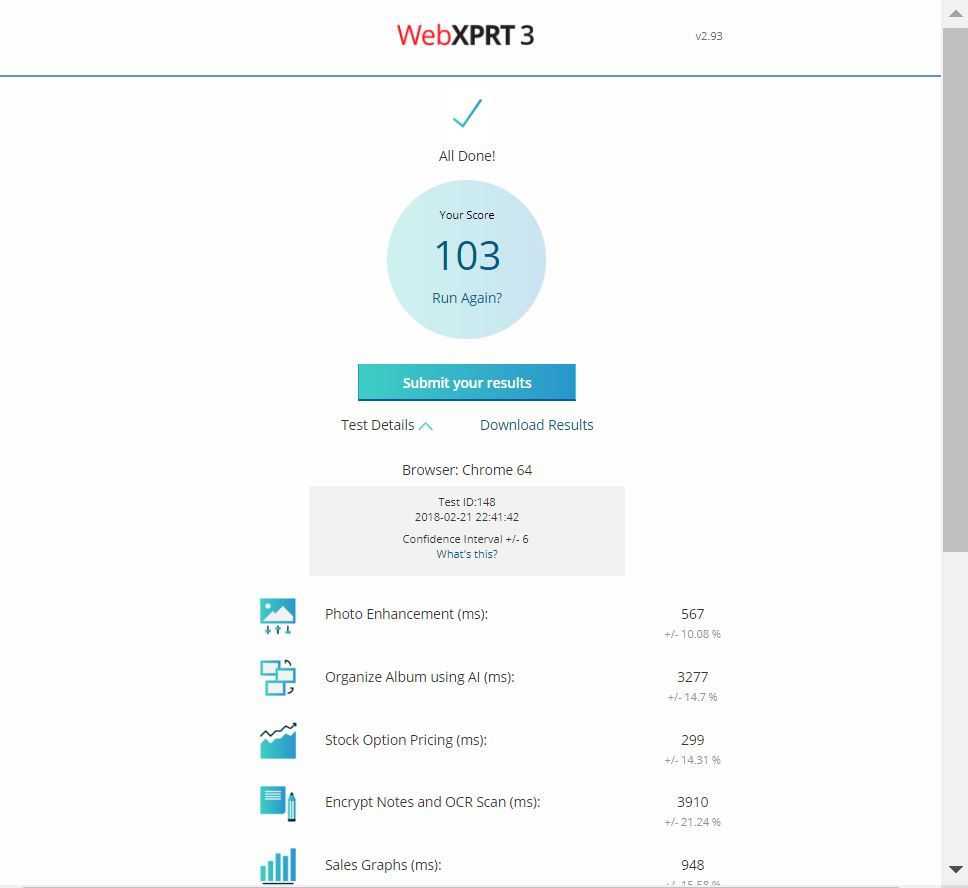

When the GA candidate build is ready, we begin two types of extensive testing. First, our quality assurance (QA) team performs a thorough review, running the build on numerous devices. In the case of WebXPRT, this review also involves testing with multiple browsers. Throughout, the QA team keeps a sharp eye out for formatting problems and bugs.

The second type of testing involves comparing the current version of the benchmark with prior versions. We tested WebXPRT 3 on almost 100 devices. While WebXPRT 2015 and WebXPRT 3 scores are not directly comparable, we normalize scores for both sets of results and check that device performance is scaling in the same way. If it isn’t, we need to determine why not.
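For readers who like to see the idea in code, here is a minimal Python sketch of one way such a scaling check could work. It is an illustration, not our actual tooling. It assumes that "normalizing" means dividing each score by a chosen baseline device's score, and that a device is worth a closer look when its relative standing shifts by more than a tolerance. The function names, the 15% tolerance, the device names, and all the numbers are hypothetical placeholders, not real WebXPRT results.

```python
def normalize(scores, baseline):
    """Express every score relative to the baseline device's score."""
    base = scores[baseline]
    return {device: score / base for device, score in scores.items()}


def find_scaling_outliers(old_scores, new_scores, baseline, tolerance=0.15):
    """Return devices whose relative standing differs between two versions.

    Assumption: scores are normalized to a shared baseline device, and a
    device is flagged when new_normalized / old_normalized deviates from
    1.0 by more than `tolerance` (an arbitrary illustrative threshold).
    """
    old_norm = normalize(old_scores, baseline)
    new_norm = normalize(new_scores, baseline)
    outliers = {}
    # Only compare devices that were tested with both versions.
    for device in old_norm.keys() & new_norm.keys():
        ratio = new_norm[device] / old_norm[device]
        if abs(ratio - 1.0) > tolerance:
            outliers[device] = round(ratio, 2)
    return outliers


# Placeholder data: hypothetical devices and made-up scores.
webxprt_2015 = {"device_a": 200.0, "device_b": 400.0, "device_c": 300.0}
webxprt_3 = {"device_a": 100.0, "device_b": 205.0, "device_c": 100.0}

outliers = find_scaling_outliers(webxprt_2015, webxprt_3, baseline="device_a")
print(outliers)  # {'device_c': 0.67}
```

In this made-up example, device_b keeps roughly the same standing relative to the baseline in both versions, while device_c drops to about two-thirds of its earlier relative score, so it is the one that would need investigating.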

Finally, after testing is complete and the new build is ready, we finalize all related documentation and tie the various pieces together on the website. This involves updating the main benchmark page and graphics, the FAQ page, the results tables, and the members’ area.

That’s just a brief summary of what we’ve been up to with WebXPRT in the last few weeks. If you have any questions about the XPRTs or the development community, feel free to ask!

Justin