Here at the XPRTs, our primary goal is to provide free, easy-to-use benchmark tools that can help everyone—from OEM labs to tech press journalists to individual consumers—understand how well devices will perform while completing everyday computing tasks. We track progress toward that goal in several ways, but one of the most important is how much people use and discuss the XPRTs. When the name of one of our apps appears in an ad, article, or tech review, we call it a “mention.” Tracking mentions helps us gauge our reach.

We occasionally like to share a sample of recent XPRT mentions here on the blog. If you just started following the XPRTs, you may be surprised by our program’s global reach. If you’re a longtime reader who is used to seeing WebXPRT or CrXPRT in major tech press articles, you may be surprised to learn about overseas tech press publications or to see how some government agencies use the XPRTs to make decisions. In any case, we hope you’ll enjoy exploring the links below!

Recent mentions include:

- Computerworld noted that the Polish government’s Ministry of Digital Affairs used WebXPRT to establish a minimum performance baseline for Chromebooks that could be eligible for its Laptops for Teachers program.

- Tom’s Guide used WebXPRT 4 in an article titled “Best AI laptop for 2025—tested and rated” and in reviews of the Acer Chromebook Plus 515 and Samsung Galaxy Chromebook Plus.

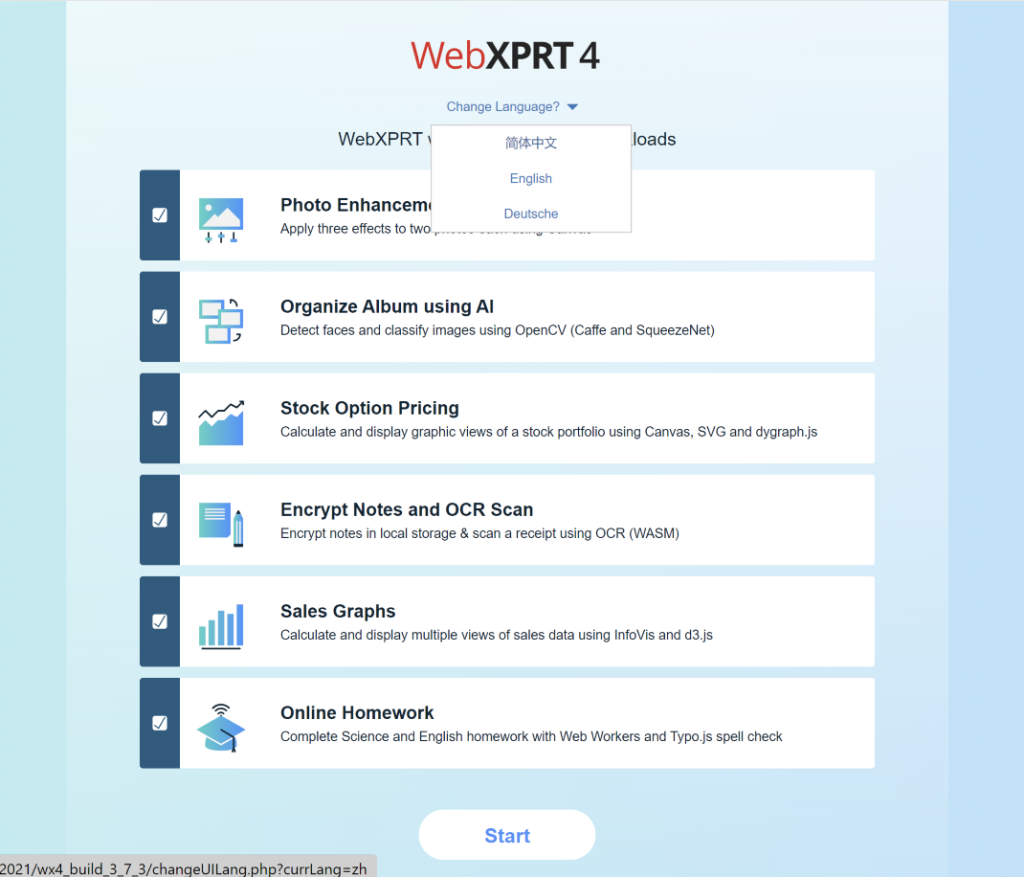

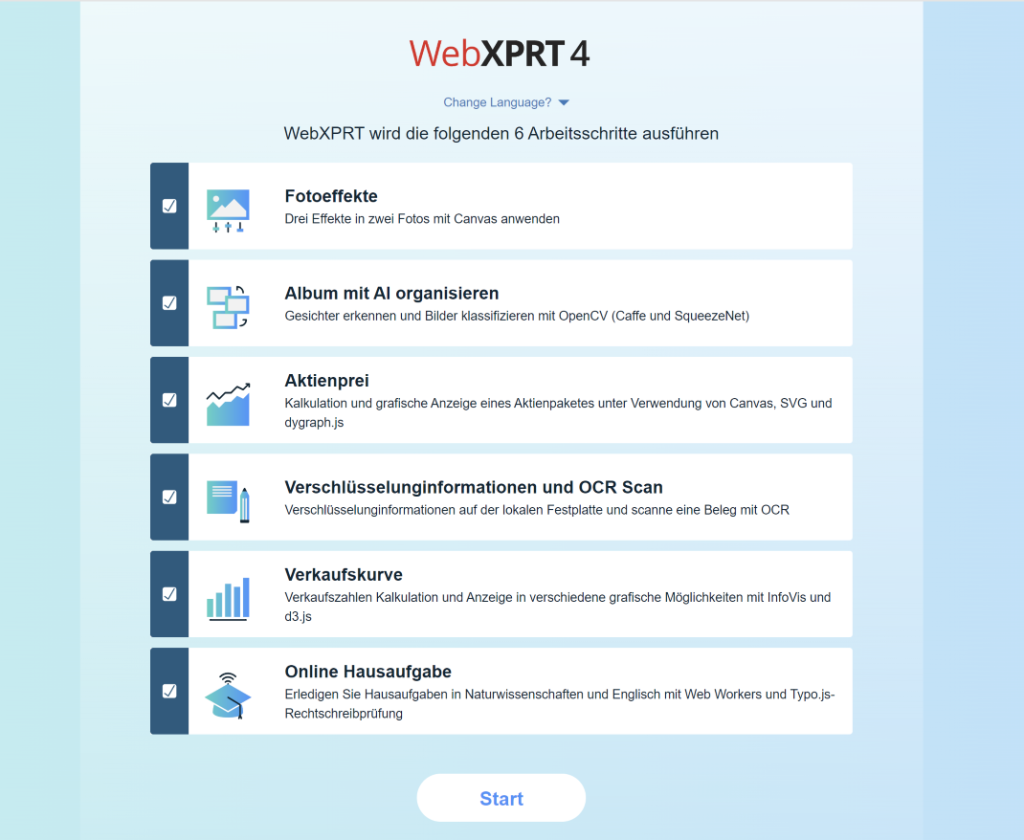

- PConline (China) used WebXPRT 4’s Simplified Chinese language option while evaluating the Apple MacBook Pro 14 2024.

- Laptop Mag used CrXPRT 2 and WebXPRT 4 to generate data for an article titled “Best touchscreen laptops in 2025: 7 tested and reviewed.”

- PCWorld used CrXPRT 2 to review a range of Chromebooks for an article titled “Best Chromebooks 2025: Best overall, best battery life, and more.”

- Notebookcheck used WebXPRT 4 in dozens of device reviews, including evaluations of the HP Envy x360 15, Razer Blade 16 2025, Apple MacBook Air 15 M4, Samsung Galaxy S25 Ultra, and Microsoft Surface Laptop 7 15.

- Other outlets that have published articles, ads, or reviews mentioning the XPRTs in the last few months include the following: 3DNews.ru (Russia), Acer, Alza.cz (Czech Republic), Android Headlines, Android.com.pl (Poland), BenchLife.info, ComputerBase (Germany), Dell, DGL.ru (Russia), eTeknix, Gadgety (Israel), GeekWeek (Poland), GSMArena.com, ID.nl (Netherlands), Intel, ITC.ua (Ukraine), ITMedia (Japan), Komputronik (Poland), Mashable, MSN, PC Games Hardware (Germany), PCMag, PurePC.pl (Poland), QQ.com (China), SlashGear, Sohu.com (China), TechHut, TechRadar, TechToday (Ukraine), Tom’s Hardware, Tool Elvaliant (Italy), Tweakers, and ZDNet, among others.

If you’d like to receive monthly updates on XPRT-related news and activity, we encourage you to sign up for the BenchmarkXPRT Development Community newsletter. It’s completely free, and all you need to do to join the newsletter mailing list is let us know! We won’t publish, share, or sell any of the contact information you provide, and we’ll only send you the monthly newsletter and occasional benchmark-related announcements, such as important news about patches or releases.

If you have any questions about the XPRTs, suggestions, or requests for future blog topics, please feel free to contact us.

Justin