Each new XPRT benchmark release attracts new visitors to our site. Those who haven’t yet run any of our benchmarks may not know how everything works. For those folks, as well as longtime testers who may not be aware of everything the XPRTs have to offer, we like to occasionally revisit the basics here in the blog. Today, we cover the simple process of submitting WebXPRT 4 test results for publication in the WebXPRT 4 results viewer.

Unlike sites that publish all results that users submit, we publish only results that meet our evaluation criteria, whether they come from internal lab testing, user submissions, or reliable tech media sources. Scores must be consistent with general expectations and, for sources outside of our lab, must include enough detailed system information that we can determine whether the score makes sense. Every score in the WebXPRT results viewer and on the general XPRT results page meets these criteria.

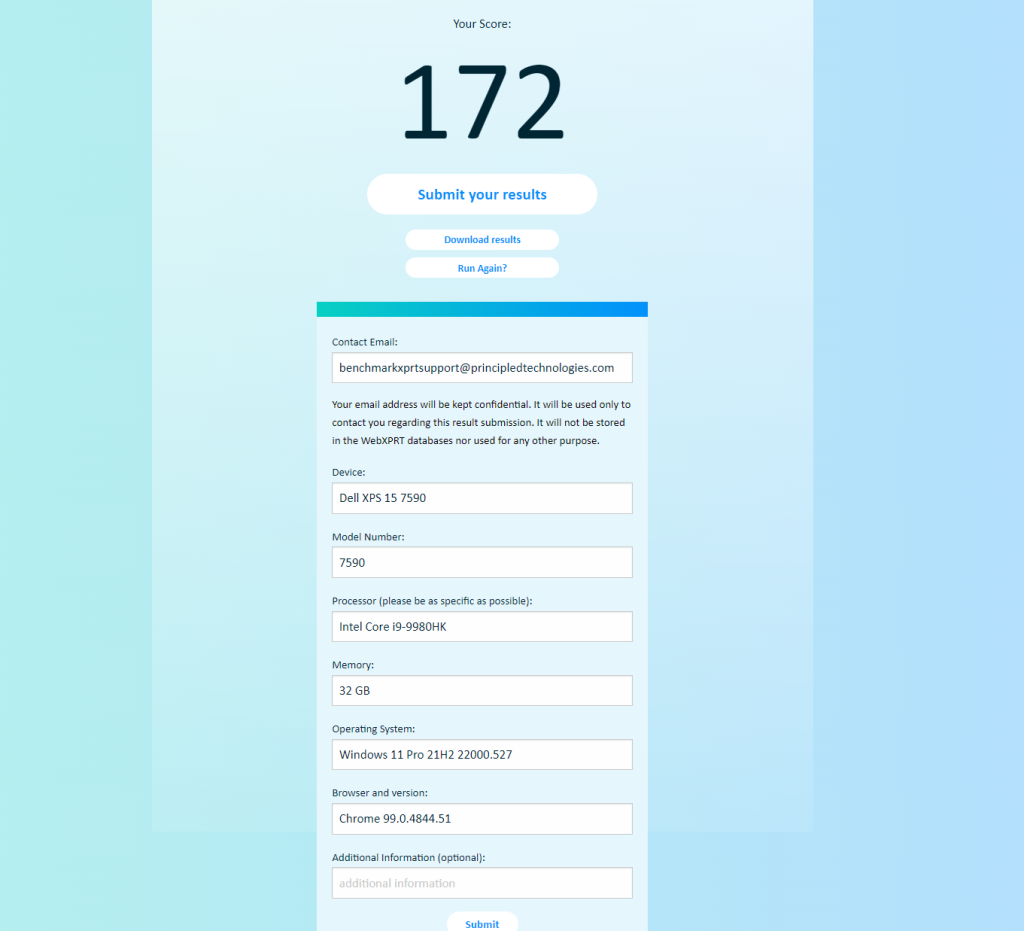

Everyone who runs a WebXPRT 4 test is welcome to submit scores for us to consider for publication. The process is quick and easy. At the end of the WebXPRT test run, click the Submit your results button below the overall score, complete the short submission form, and click Submit again. Please be as specific as possible when filling in the system information fields. Detailed device information helps us assess whether individual scores represent valid test runs. The screenshot below shows how the form would look if I submitted a score at the end of a WebXPRT 4 run on my personal system.

After you submit your score, we’ll contact you to confirm how we should display the source. You can choose one of the following:

- Your first and last name

- “Independent tester” (for those who wish to remain anonymous)

- Your company’s name, provided that you have permission to submit the result in its name. If you want to use a company name, please provide a valid company email address.

We will not publish any additional information about you or your company without your permission.

We look forward to seeing your score submissions. If you have suggestions for the WebXPRT 4 results viewer or any other aspect of the XPRTs, let us know!

Justin