It’s been just over a year since we launched the XPRT Weekly Tech Spotlight by featuring our first device, the Google Pixel C. Spotlight has since become one of the most popular items at BenchmarkXPRT.com, and we thought now would be a good time to recap the past year, offer more insight into the choices we make behind the scenes, and look at what’s ahead for Spotlight.

The goal of Spotlight is to provide PT-verified specs and test results that can help consumers make smart buying decisions. We try to include a wide variety of device types, vendors, software platforms, and price points in our inventory. The devices we choose tend to fall into one of two main groups: popular new devices generating a lot of interest, and devices that have unique form factors or unusual features.

To date, we’ve featured 56 devices: 16 phones, 11 laptops, 10 two-in-ones, 9 tablets, 4 consoles, 3 all-in-ones, and 3 small-form-factor PCs. The operating systems these devices run include Android, ChromeOS, iOS, macOS, OS X, Windows, and an array of vendor-specific OS variants and skins.

As much as possible, we test using out-of-the-box (OOB) configurations. We want to present test results that reflect what everyday users will experience on day one. Depending on the vendor, the OOB approach can mean that some devices arrive bogged down with bloatware while others are relatively clean. We don’t attempt to “fix” anything in those situations; we simply test each device “as is” when it arrives.

If devices arrive with outdated OS versions (as is often the case with Chromebooks), we update to current versions before testing, because that’s the best reflection of what everyday users will experience. In the past, that approach would’ve been more complicated with Windows systems, but Microsoft’s shift to “Windows as a service” means that most users now receive significant OS updates automatically.

The OOB approach also means that the WebXPRT scores we publish reflect the performance of each device’s default browser, even if it’s possible to install a faster browser. Our goal isn’t to perform a browser shootout on each device, but to give an accurate snapshot of OOB performance. For instance, last week’s Alienware Steam Machine entry included two WebXPRT scores: a 356 on the SteamOS browser app and a 441 on Iceweasel 38.8.0 (a Firefox variant used in the device’s Linux-based desktop mode). That’s a difference of roughly 24 percent, but the main question for us was which browser was more likely to be used in an OOB scenario. With the Steam Machine, the answer was truly “either one.” Many users will use the browser app in the SteamOS environment, and many will take the few steps needed to access the desktop environment. In that case, even though one browser was significantly faster than the other, omitting one score in favor of the other would have excluded results from an equally likely OOB environment.

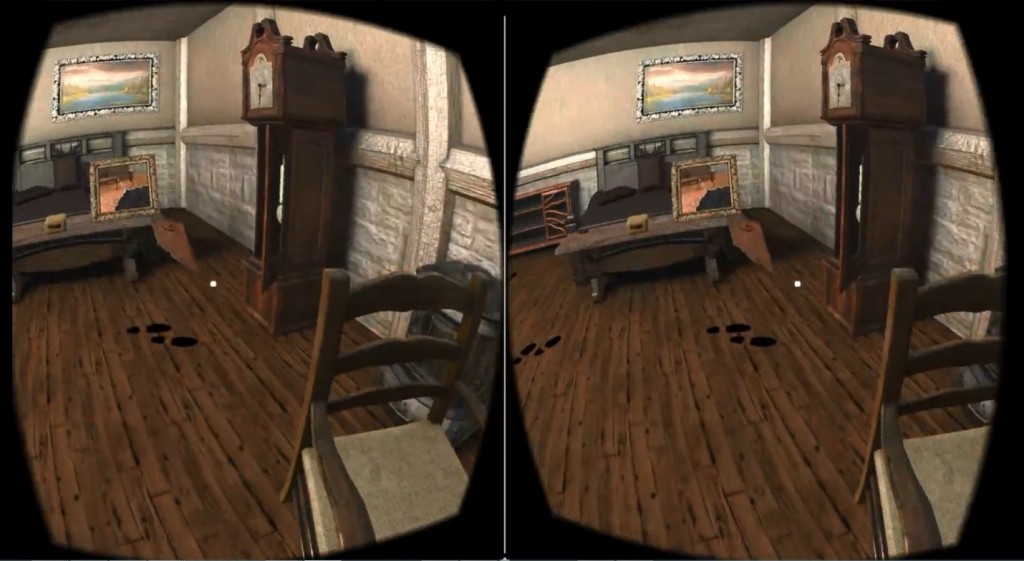

We’re always looking for ways to improve Spotlight. We recently began adding more photos for each device, including ones that highlight important form-factor elements and unusual features. Moving forward, we plan to expand Spotlight’s offerings to include automatic score comparisons, additional system information, and improved graphical elements. Most importantly, we’d like to hear your thoughts about Spotlight. What devices and device types would you like to see? Are there specs that would be helpful to you? What can we do to improve Spotlight? Let us know!

Justin