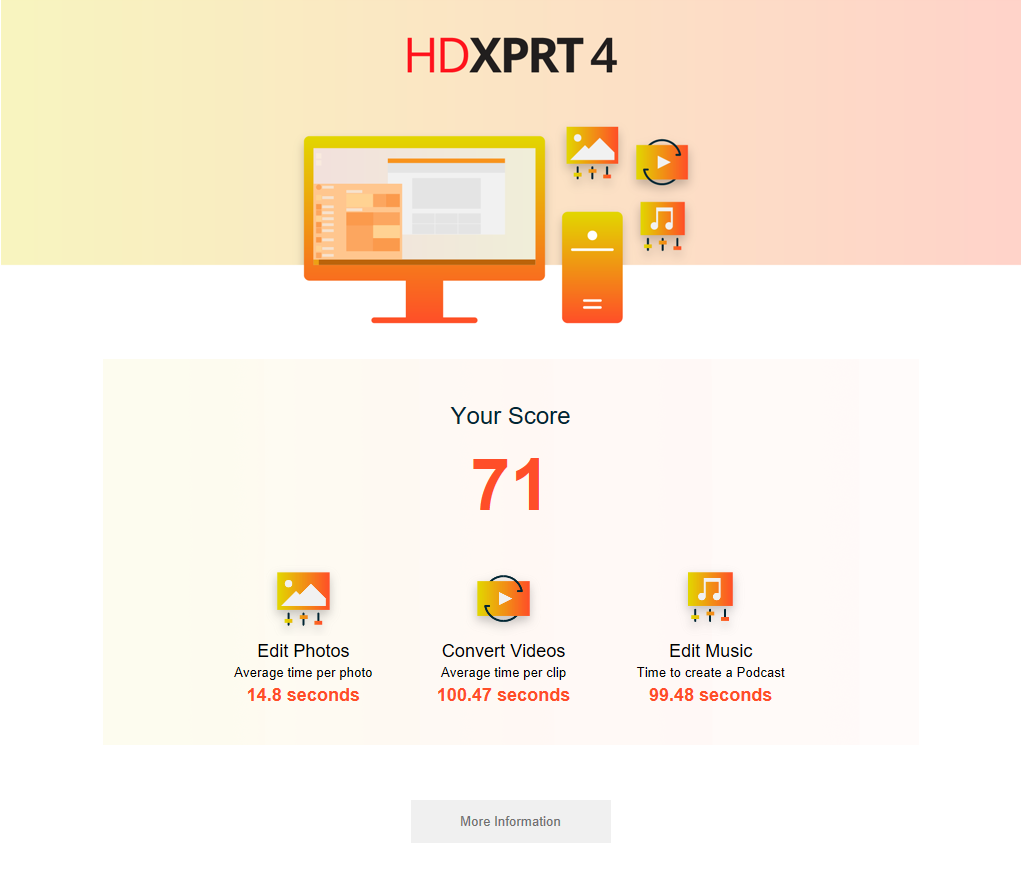

This week, we’re sharing a little more about the upcoming HDXPRT 4 Community Preview. Just like previous versions of HDXPRT, HDXPRT 4 will use trial versions of commercial applications to complete workload tasks. We will include installers for some of those programs, such as Audacity and HandBrake, in the HDXPRT installation package. For other programs, such as Adobe Photoshop Elements 2018 and CyberLink MediaEspresso 7.5, users will need to download the necessary installers before testing, using links and instructions that we will provide. The HDXPRT 4 installation package is just over 4.7 GB, slightly smaller than those of previous versions.

I can also report that the new version requires fewer pre-test configuration steps, and a full test run takes much less time than before. Some systems that took over an hour to complete an HDXPRT 2014 run are completing HDXPRT 4 runs in about 25 minutes.

We’ll continue to provide more information as we get closer to releasing the community preview. If you’re interested in testing with HDXPRT 4 before the general release but have not yet joined the community, we invite you to join now. If you have any questions or comments about HDXPRT or the community, please contact us.

Justin