February 28, 2013, was a momentous day for the BenchmarkXPRT Development Community. On that day, we published a press release announcing the official launch of the first version of the WebXPRT benchmark, WebXPRT 2013. As difficult as it is for us to believe, the 10-year anniversary of the initial WebXPRT launch is just a few short months away!

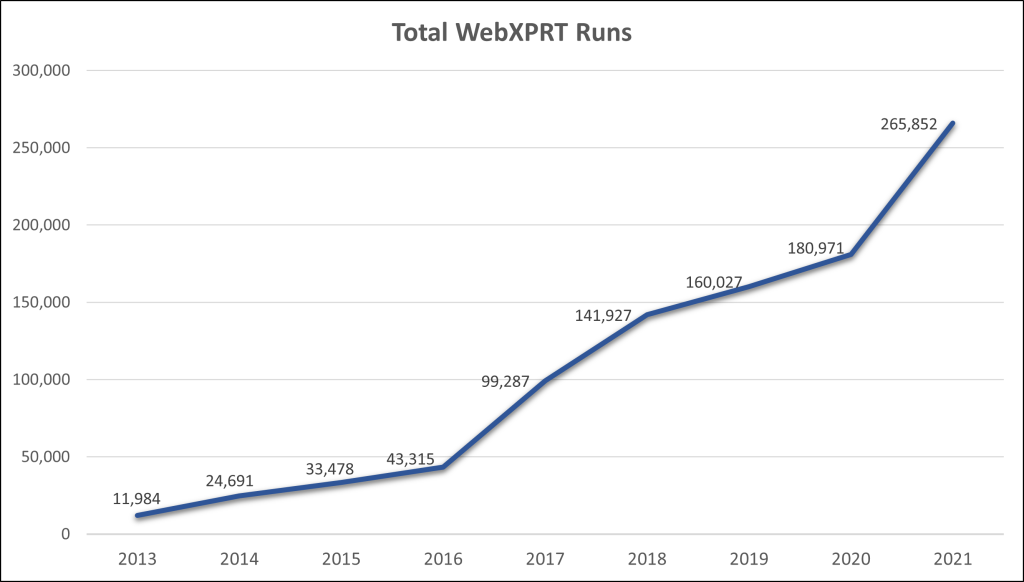

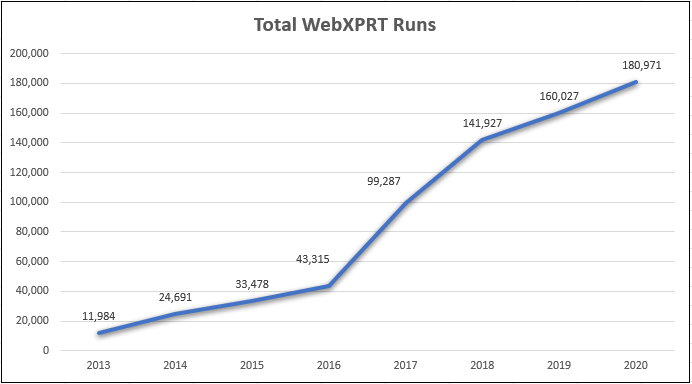

We introduced WebXPRT as a unique browser performance benchmark in a field that was already crowded with a variety of measurement tools. Since those early days, WebXPRT has grown from a small foothold in the market into a worldwide industry standard. Over the years, hundreds of tech press publications have used WebXPRT in thousands of articles and reviews, and the WebXPRT completed-runs counter has rolled over the 1,000,000-run mark.

New web technologies are continually changing the way we use the web, and browser performance benchmarks should evaluate how well new devices handle the web of today, not the web of several years ago. While some organizations have stopped developing other browser performance benchmarks, we’ve had the opportunity to continue updating and refining WebXPRT. We can look back at each of the four major iterations of the benchmark (WebXPRT 2013, WebXPRT 2015, WebXPRT 3, and WebXPRT 4) and see a consistent philosophy and shared technical lineage contributing to a product that has steadily improved.

As we get closer to the 10-year anniversary of WebXPRT next year, we’ll be sharing more insights about its reach and impact on the industry, discussing possible future plans for the benchmark, and announcing some fun anniversary-related opportunities for WebXPRT users. We think 2023 will be the best year yet for WebXPRT!

Justin