We recently received a question from a member of the tech press about whether we would be willing to supply them with the WebXPRT 4 source code, along with instructions for how to set up a local instance of the benchmark for their internal testbed. We were happy to help, and they are now able to automate WebXPRT 4 runs within their own isolated network.

If you’re a new XPRT tester, you may not be aware that we provide free access to the source code for each of the XPRT benchmarks. Publishing XPRT source code is part of our commitment to making the XPRT development process as transparent as possible. By allowing all interested parties to access and review our source code, we’re encouraging openness and honesty in the benchmarking industry and are inviting the kind of constructive feedback that helps to ensure that the XPRTs continue to contribute to a level playing field.

While XPRT source code is available to the public, our approach to derivative works differs from some open-source models. Traditional open-source models encourage developers to change products and even take them in different directions. Because benchmarking requires a product that remains static to enable valid comparisons over time, we allow people to download the source, but we reserve the right to control derivative works. This policy helps prevent someone from publishing an unauthorized version of the benchmark and calling it an “XPRT.”

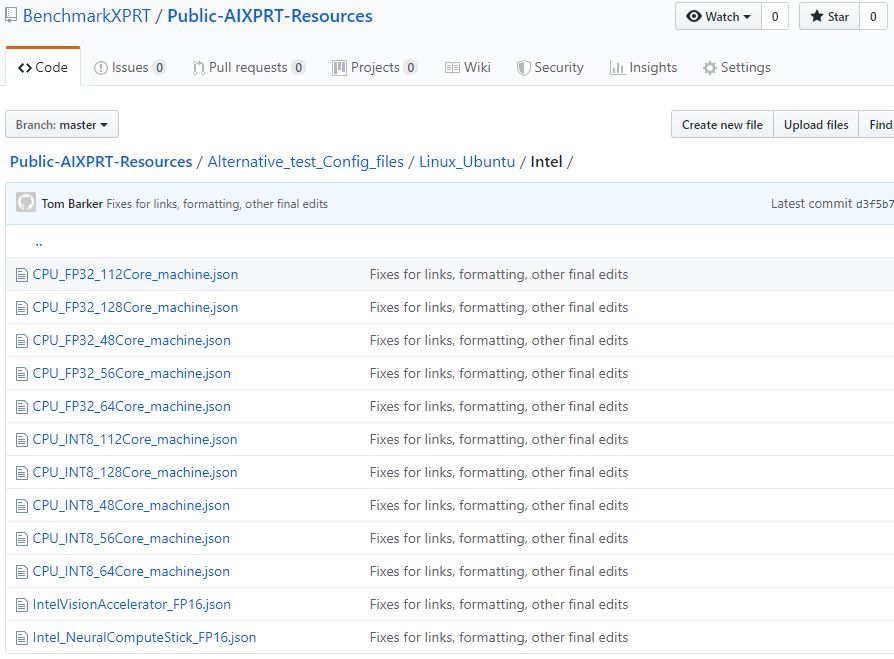

Accessing XPRT source code is a straightforward process. The source code for CloudXPRT is freely available in our CloudXPRT GitHub repository. If you’d like to download and review the source code for WebXPRT 4 or any of the other XPRTs, or get instructions for how to build one of the benchmarks, all you need to do is contact us at benchmarkxprtsupport@principledtechnologies.com. Your feedback is valuable!

Justin